Google Gemma 4 for Business: Frontier AI on Your Own Hardware, With No Cloud Required

Google Gemma 4 for business might be the most significant AI release of 2026 that most SMEs have never heard of. On April 2, 2026, Google DeepMind released Gemma 4, a family of four open-weight AI models built from the same research as the proprietary Gemini 3.

The headline: a 31B dense model and a 26B mixture-of-experts model delivering what Google calls “frontier-level intelligence” on a personal computer, with no cloud connection required. They post Arena Elo scores of 1464 and 1453 respectively, competing with models more than 20 times their size. Both are released under the commercially permissive Apache 2.0 licence and are free to download right now.

For SMEs, the implications go far beyond another model release. This is the first time a major AI lab has put genuinely frontier-capable AI directly into the hands of businesses without requiring cloud subscriptions, API fees or data to leave the premises. That changes the AI affordability equation entirely.

Google Gemma 4 for Business: What Actually Got Released

Gemma 4 ships in four sizes, each built for a different deployment scenario.

The E2B (Effective 2B) and E4B (Effective 4B) models are built for phones, tablets, and edge devices. They handle text, images, video and audio natively, and support over 140 languages with cultural context awareness. The E2B is the foundation for Gemini Nano 4 on Android devices. These run on consumer hardware, including MacBooks with 8GB of RAM, and deliver 3x faster inference than the previous Gemma generation while consuming 60% less battery on mobile.

The 26B MoE (Mixture of Experts) model is the efficiency breakthrough. It has 26 billion total parameters but activates only 3.8 billion at any given time during inference. The practical result: inference costs roughly one-seventh of a comparable dense model, with reasoning quality that competes with much larger systems. It runs on consumer GPUs with 16GB or more of VRAM (a quantised version runs on even less).
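The "one-seventh" figure follows from the two parameter counts quoted above. The sketch below simply divides one by the other; it assumes per-token compute scales roughly with the number of active parameters, which is a simplification. Note that all 26 billion parameters still have to be stored in memory, even though only a fraction is used for each token.

```python
# Figures taken from the text above, in billions of parameters.
TOTAL_PARAMS_B = 26.0
ACTIVE_PARAMS_B = 3.8


def active_fraction(active_b: float, total_b: float) -> float:
    """Share of the model's weights used for any single token."""
    return active_b / total_b


fraction = active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B)

print(f"Active share per token: {fraction:.1%}")        # 14.6%
print(f"Total-to-active ratio:  {1 / fraction:.1f}x")   # 6.8x
```

A ratio of about 6.8 is where "roughly one-seventh" comes from. Real-world savings depend on the hardware and serving software, so treat this as an upper-level estimate rather than a measured result.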

The 31B Dense model is the full-power variant, designed for workstations or cloud deployment. It needs a GPU with 24GB of VRAM such as an Nvidia RTX 4090, or equivalent cloud infrastructure. It currently ranks as the #3 open model in the world on the Arena AI text leaderboard.
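For hardware planning, a back-of-envelope estimate of weight memory is a useful sanity check. The sketch below multiplies the parameter count by the bits stored per weight. It covers the weights only and ignores working memory for long contexts, so real requirements will be higher. The precision levels (16, 8 and 4 bits) are common choices and an assumption here, not something stated in the release details above.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


def weights_fit(params_billion: float, bits_per_weight: int, vram_gb: float) -> bool:
    """True if the weights alone fit in the given VRAM (no headroom included)."""
    return weight_memory_gb(params_billion, bits_per_weight) <= vram_gb


# Parameter counts and VRAM sizes are the ones quoted in the text.
MODELS = {"26B MoE": (26.0, 16.0), "31B Dense": (31.0, 24.0)}

for name, (params_b, vram_gb) in MODELS.items():
    for bits in (16, 8, 4):
        size = weight_memory_gb(params_b, bits)
        verdict = "fits" if weights_fit(params_b, bits, vram_gb) else "does not fit"
        print(f"{name} at {bits}-bit: {size:.1f} GB, {verdict} in {vram_gb:.0f} GB")
```

On this arithmetic, the 26B model needs about 13 GB at 4-bit and the 31B model about 15.5 GB, while the 16-bit versions need 52 GB and 62 GB. That suggests the VRAM figures quoted above refer to reduced-precision builds, though that reading is an inference from the numbers rather than a documented fact.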

Across the family, you get a 256K context window on the larger two models (enough to analyse full codebases or handle long agentic workflows), native tool use built in (the models can plan steps and call tools without extra wiring), and multimodal capability (with text, images, video and audio all supported on the smaller models).

Why Gemma 4 Matters More Than Most Model Releases

Three things make this release different from the usual AI news cycle and all three matter for SMEs.

Frontier Intelligence on Your Own Hardware

The Arena Elo benchmark scores tell the story. Gemma 4’s 31B model scored 1464. The 26B MoE scored 1453. For context, GLM-5 at 754 billion parameters scored 1469. Kimi K2.5 at roughly 1.1 trillion parameters scored 1464. In other words, Google has released open models that match the scores of systems roughly 24 to 35 times larger than the 31B model.
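The size multiples can be checked directly from the parameter counts quoted above. This is a minimal sketch that uses total parameter count as a crude proxy for model size; the 1.1 trillion figure for Kimi K2.5 is approximate in the text, so the ratios are approximate too.

```python
# Parameter counts in billions, as quoted in the text.
GEMMA_MODELS = {"Gemma 4 31B": 31, "Gemma 4 26B MoE": 26}
LARGER_MODELS = {"GLM-5": 754, "Kimi K2.5": 1100}


def size_ratio(larger_b: float, smaller_b: float) -> float:
    """How many times larger one model is than another, by parameter count."""
    return larger_b / smaller_b


for gemma_name, gemma_b in GEMMA_MODELS.items():
    for other_name, other_b in LARGER_MODELS.items():
        ratio = size_ratio(other_b, gemma_b)
        print(f"{other_name} is about {ratio:.0f}x the size of {gemma_name}")
```

Against the 31B model the multiples come out at about 24 and 35; against the 26B model they are about 29 and 42.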

The practical implication is significant. You no longer need cloud access or enterprise AI subscriptions to run frontier-level AI in your business. A workstation that costs a few thousand pounds can run models that match the quality of systems previously only available through Microsoft, OpenAI or Google Cloud. This fundamentally changes the economics of AI adoption for SMEs.

Apache 2.0 Licensing: Fully Open and Commercial-Friendly

This is the first time Google has released Gemma under Apache 2.0, one of the most widely used permissive licences in the open source world. You can use the models commercially. You can modify them. You can redistribute them, provided you keep the licence and attribution notices. You can fine-tune them on your own data. You can deploy them in products you sell. There are no monthly active user limits (unlike Meta’s Llama 4), no acceptable use gatekeeping, and no separate enterprise agreement required.

Compare this to the alternatives. OpenAI charges for API usage, typically by the token. Anthropic does the same. So do Google’s own Gemini API and Azure AI Services. Gemma 4, running on your own hardware, costs you the hardware, the electricity and the time to maintain it. There is no per-request bill.
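One way to make this comparison concrete is a simple break-even calculation. The sketch below is illustrative only: every figure in it is a made-up placeholder, and the function name is hypothetical. Swap in your own hardware quote, running costs and current API spend.

```python
import math
from typing import Optional


def months_to_break_even(
    hardware_cost: float,
    monthly_running_cost: float,
    monthly_api_cost: float,
) -> Optional[int]:
    """Whole months until local hardware has paid for itself.

    Returns None when running locally costs as much as, or more than,
    the API bill it replaces, so the hardware never pays back.
    """
    monthly_saving = monthly_api_cost - monthly_running_cost
    if monthly_saving <= 0:
        return None
    return math.ceil(hardware_cost / monthly_saving)


# Placeholder figures in pounds, chosen only to show the arithmetic.
example = months_to_break_even(
    hardware_cost=3000,        # "a few thousand pounds" workstation
    monthly_running_cost=40,   # electricity and upkeep (assumed)
    monthly_api_cost=290,      # current cloud API spend (assumed)
)
print(f"Break-even after {example} months")  # 12 months with these inputs
```

If the API bill is small, the function returns `None`, which is a useful reminder that local deployment only pays off above a certain level of usage.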

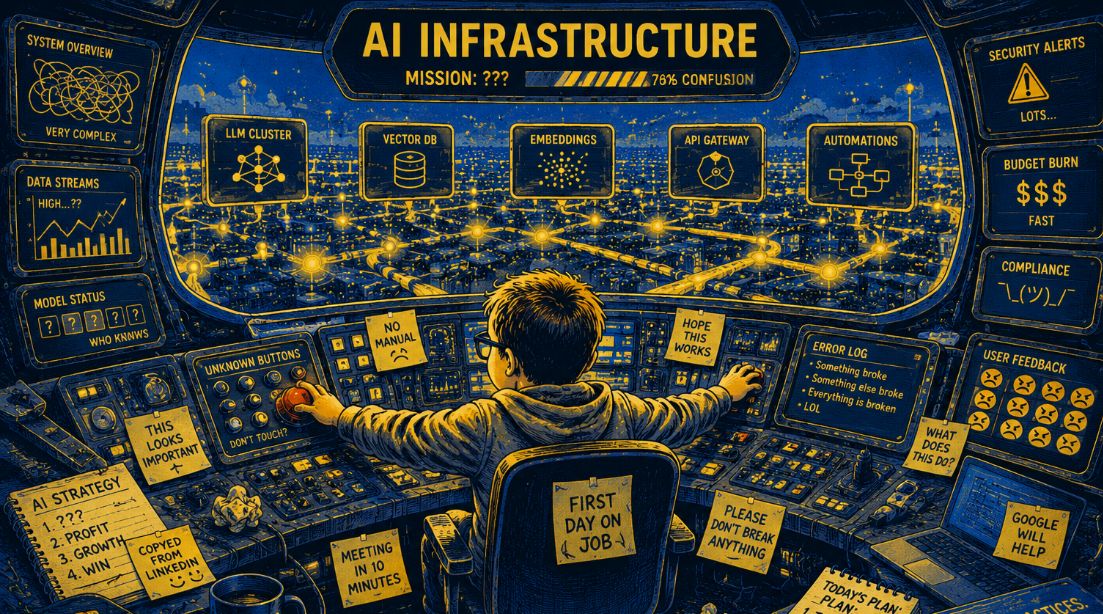

For SMEs evaluating AI costs against the backdrop of the broader AI infrastructure cost crisis covered in our AI infrastructure costs blog, this is a meaningful shift. Not every AI use case needs frontier cloud models. A surprising number can be handled by a well-deployed Gemma 4 instance running locally.

Your Data Stays on Your Machine

Local execution means local data. Every time your business uses ChatGPT, Claude or the Gemini API, your prompts and data travel to someone else’s servers, sit on someone else’s infrastructure, and are governed by someone else’s terms. For SMEs handling client data, financial information, legal documents or anything covered by GDPR, this creates real compliance friction.

Gemma 4 changes that. The model runs on your hardware. Your prompts never leave your network. Your client data stays within your security perimeter. This aligns directly with the broader shift toward sovereign AI we covered in our Nvidia AI dominance blog, where the Palantir-Nvidia Sovereign AI Operating System pointed to a future of enterprises running AI on their own infrastructure rather than in someone else’s cloud.
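As a rough illustration of what "never leave your network" looks like in practice, the sketch below builds a request to a model server running on the same machine and refuses any address that is not local. It assumes a local server that exposes Ollama's `/api/generate` endpoint on its default port; the model tag `gemma4-local` is a hypothetical placeholder, so use whatever name your own server lists.

```python
import json
import urllib.request
from urllib.parse import urlparse

# Assumption: an Ollama-compatible server on this machine, default port.
LOCAL_ENDPOINT = "http://localhost:11434/api/generate"
MODEL_TAG = "gemma4-local"  # hypothetical tag, not a confirmed model name

LOOPBACK_HOSTS = {"localhost", "127.0.0.1", "::1"}


def is_loopback(url: str) -> bool:
    """True only when the URL points at this machine."""
    return urlparse(url).hostname in LOOPBACK_HOSTS


def build_request(prompt: str, url: str = LOCAL_ENDPOINT) -> urllib.request.Request:
    """Prepare a POST request, refusing any endpoint that is not local."""
    if not is_loopback(url):
        raise ValueError(f"Refusing to send prompt to non-local endpoint: {url}")
    payload = json.dumps(
        {"model": MODEL_TAG, "prompt": prompt, "stream": False}
    ).encode("utf-8")
    return urllib.request.Request(
        url,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str) -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt)) as resp:
        return json.loads(resp.read())["response"]
```

Calling `ask_local_model("Summarise this contract clause: ...")` requires the local server to be running. The loopback check is a simple guard, not a substitute for proper network controls.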

For businesses with AI Compliance concerns, this is not a minor benefit. It is the single most important structural shift in AI deployment options for SMEs in 2026.

What Gemma 4 Is Good For (And What It Isn’t)

The honest answer: Gemma 4 is excellent for a large subset of business AI use cases, and unsuitable for others. Here is the practical breakdown.

Gemma 4 is well suited for document analysis and summarisation, internal coding assistants, agentic workflows involving tool use, image analysis for things like invoice processing or visual quality inspection, customer-facing chatbots with a defined scope, multilingual content generation for international businesses, and fine-tuning on your own data for specialised tasks. The 26B MoE model is particularly strong at coding and maths.

Gemma 4 is less suited for cutting-edge reasoning tasks where absolute quality matters more than cost, use cases requiring the absolute latest training data, or extremely complex multi-step reasoning where the top proprietary models still have an edge. If you are building something where a 5% quality difference translates to significant business value, you may still want Claude Opus 4.6, GPT-5.4 or Gemini 3.1 Pro via their APIs.

The strategic question for SMEs is not “Gemma 4 or nothing”. It is “which use cases in my business are best served by a local open model, and which justify paying for cloud API access?” This is exactly the kind of analysis that belongs in an AI Roadmap rather than guesswork.
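That triage can be sketched as a simple rule set. The example below is a hypothetical starting point, not a recommendation: the two criteria and the three outcomes are assumptions chosen to mirror the trade-offs described above, and a real roadmap would weigh more factors.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    handles_regulated_data: bool    # e.g. client records covered by GDPR
    needs_top_tier_reasoning: bool  # a small quality gap has real business value


def suggest_deployment(workload: Workload) -> str:
    """Return 'local', 'cloud' or 'review' for a single workload."""
    if workload.handles_regulated_data and workload.needs_top_tier_reasoning:
        # Privacy and quality pull in opposite directions: needs a human decision.
        return "review"
    if workload.needs_top_tier_reasoning:
        return "cloud"
    # Everything else defaults to the local open model.
    return "local"


# Illustrative workloads only.
examples = [
    Workload("Invoice processing", True, False),
    Workload("Marketing copy drafts", False, False),
    Workload("Complex strategy analysis", False, True),
    Workload("Legal case reasoning", True, True),
]

for w in examples:
    print(f"{w.name}: {suggest_deployment(w)}")
```

The value of writing the rules down is less the code itself than forcing an explicit answer for each use case.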

The Bigger Picture: Open Source AI Just Caught Up

Gemma 4 sits within a wider shift in the AI landscape that SMEs should understand. A few months ago, the assumption was that frontier AI required the compute resources of OpenAI, Anthropic or Google. That assumption is breaking down.

Meta’s Llama 4 (though under a more restrictive licence) has been closing the gap. Alibaba’s Qwen 3.5 competes across most benchmarks. DeepSeek has repeatedly surprised the industry with efficient open models. And now Gemma 4, under Apache 2.0, puts genuinely frontier-level capability in the hands of anyone with a well-specified workstation or high-end laptop.

This matters because it connects to the broader trends we have tracked. Nvidia is building the infrastructure layer that supports all of this. AI world models are creating physical AI capabilities. AI agents are reshaping how work gets done. And the Musk OpenAI lawsuit is about to test whether the industry’s closed, proprietary model even holds up legally.

For SMEs, the practical takeaway is this: the AI landscape in 2026 is not a choice between expensive cloud APIs and nothing. The open model ecosystem is now mature enough that a significant proportion of business AI workloads can run locally, privately and cost-effectively. The businesses that understand this and build accordingly will have meaningful cost and compliance advantages over those that default to cloud-only deployments.

The Bottom Line

Google Gemma 4 for business is a genuine step change. Frontier-level AI, running on your own hardware, under a permissive commercial licence, with your data staying on your network. Not every use case fits, but many do. And the ones that fit just became dramatically more affordable and compliance-friendly.

The question for SMEs is not whether Gemma 4 is good enough. It is which parts of your business can benefit from local, private, affordable AI, and how to deploy it properly. That starts with understanding your current position and where AI fits your specific operations.

Complete our free AI Readiness Assessment to find out where Gemma 4 and other open models could deliver value in your business, and how to build an AI strategy that balances cost, capability and compliance.